
Web scraping is an automated process used to gather large volumes of data from websites. It is also commonly referred to as web data extraction or web data scraping.

Web scraping requires two parts - a crawler and a scraper.

Indeed, if you've ever copied and pasted data from a website, you've performed the same task as a web scraper. The only difference is that you did the scraping manually.

Although web scraping can be done manually, in most cases, automated tools are preferred when scraping web data because they cost less and work faster.

Automated web scraping can retrieve hundreds, millions, or even billions of data points from across the internet. Some tools also use machine learning to help locate and extract that data.

However, you will almost certainly run into website blocks and CAPTCHAs when scraping the web.

Easily recognize CAPTCHAs and unblock for seamlessly web scraping.

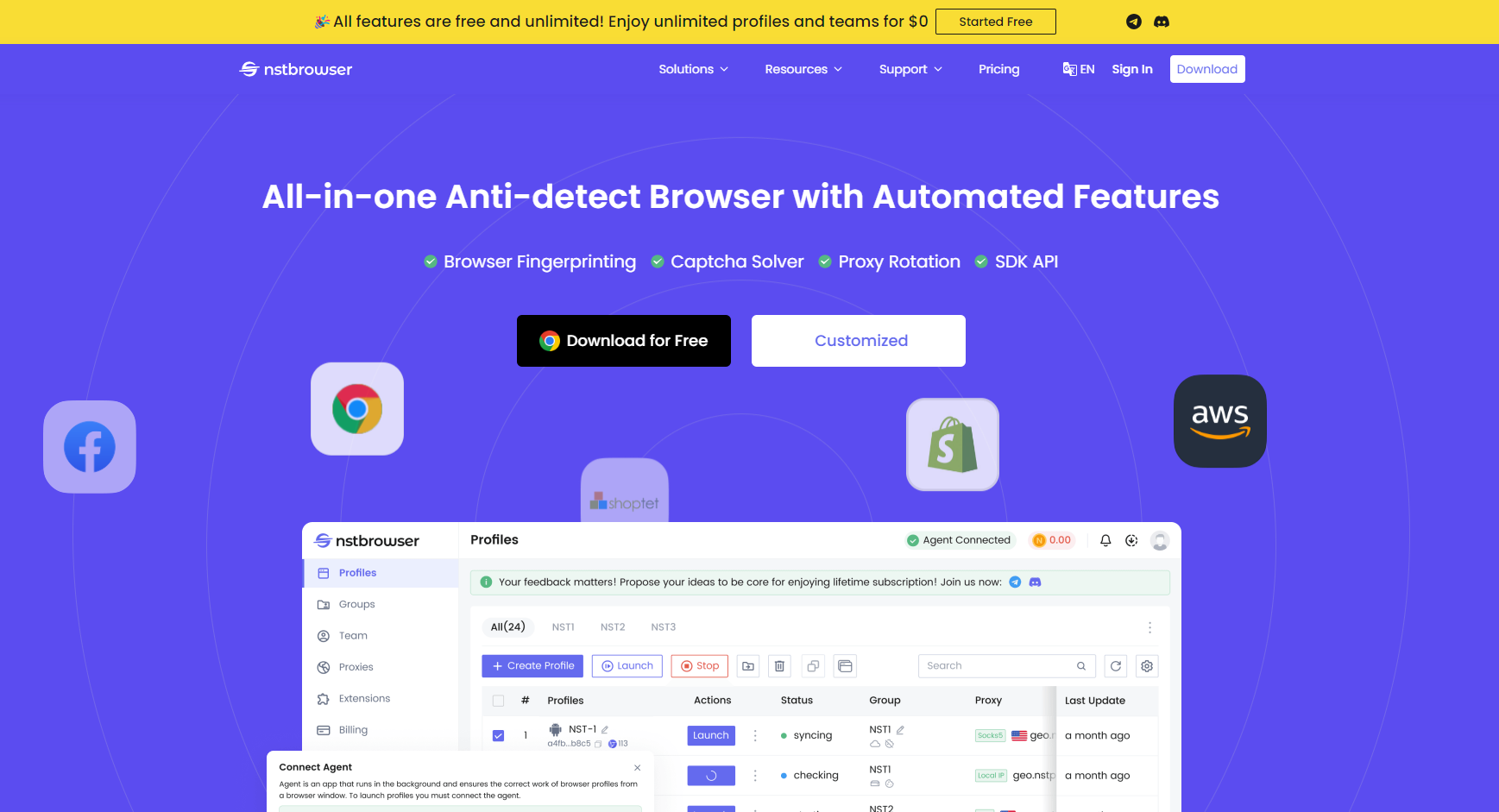

Start to use Nstbrowser Freely now!

Do you have any wonderful ideas and doubts about web scraping and Browserless?

Let's see what other developers are sharing on Discord and Telegram!

A familiar analogy helps here: the ox and the plow.

The crawler plays the role of the ox, guiding the scraper (a.k.a. the plow) in our digital realm.

That is, the crawler will guide the scraper through the Internet extracting the required data as if it were a manual operation.

A web crawler, sometimes referred to as a "spider", is the basic program that browses the web and searches and indexes content.

It browses the Internet by following links from page to page, indexing and searching content as it goes. In many projects, you first "crawl" the web or a specific site to discover URLs, which are then passed to the scraper.
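
The crawl step can be sketched in a few lines of Python. This is a minimal illustration, not a production crawler: the `crawl` function, the `LinkExtractor` class, and the in-memory `FAKE_SITE` are all assumptions made for the example, and a real crawler would fetch pages over HTTP and respect rate limits.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl that stays on the start URL's host.

    `fetch` is any callable that takes a URL and returns HTML text,
    or None if the page is unavailable.
    """
    host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    discovered = []
    while queue and len(discovered) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        discovered.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if urlparse(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return discovered


# Hypothetical in-memory "site" so the sketch runs without network access.
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="https://other.example/x">X</a>',
    "https://example.com/b": '<a href="/">Home</a>',
}

urls = crawl("https://example.com/", FAKE_SITE.get)
print(urls)
```

The returned list of URLs is what would be handed to the scraper. Links to other hosts are skipped here so the crawl stays on one site.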

A web scraper is a specialized tool designed to accurately and quickly extract data and relevant information from web pages. Web scrapers vary greatly in design and complexity, depending on the project.

So how does a web scraper work? The process seems simple, but it is actually a little complicated. After all, websites are built for humans, not machines.

When a web scraper needs to crawl a website:

Typically, the user will need to select the specific data they want from the page. For example, you might want to scrape Amazon product pages for prices and model numbers but have no interest in product reviews.
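
Selecting only the fields you care about might look like the following sketch. The HTML snippet, the class names `price`, `model`, and `review`, and the `FieldExtractor` helper are hypothetical; real product pages use their own markup, and many projects use a dedicated HTML parsing library instead of the standard-library parser shown here.

```python
from html.parser import HTMLParser


class FieldExtractor(HTMLParser):
    """Captures the text of elements whose class is in `wanted`.

    Simplification: assumes the wanted elements contain plain text only
    (no nested tags), which is enough for this illustration.
    """

    def __init__(self, wanted):
        super().__init__()
        self.wanted = set(wanted)
        self.current = None  # class name currently being captured, if any
        self.fields = {}

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        for cls in classes:
            if cls in self.wanted:
                self.current = cls
                self.fields[cls] = ""

    def handle_data(self, data):
        if self.current is not None:
            self.fields[self.current] += data

    def handle_endtag(self, tag):
        self.current = None  # stop capturing at the first closing tag


# Hypothetical product page fragment.
PAGE = """
<div class="product">
  <span class="price">$19.99</span>
  <span class="model">XJ-200</span>
  <div class="review">Great product!</div>
</div>
"""

extractor = FieldExtractor(["price", "model"])
extractor.feed(PAGE)
record = {name: text.strip() for name, text in extractor.fields.items()}
print(record)
```

The review text is present in the page but never captured, because `review` is not in the list of wanted fields.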

In most cases, the web scraper will output the data to a CSV or Excel spreadsheet, while more advanced ones will support other formats, such as API-ready JSON.
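
As a rough sketch of that output step, the snippet below writes the same records as CSV and as JSON using only the Python standard library. The sample `records` and the helper names `to_csv` and `to_json` are made up for illustration.

```python
import csv
import io
import json

# Hypothetical scraped records.
records = [
    {"model": "XJ-200", "price": "19.99"},
    {"model": "XJ-300", "price": "24.50"},
]


def to_csv(rows, fieldnames):
    """Serialize rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows):
    """Serialize rows to pretty-printed JSON text."""
    return json.dumps(rows, indent=2)


csv_text = to_csv(records, ["model", "price"])
json_text = to_json(records)
print(csv_text)
print(json_text)
```

To write to disk instead, the same `csv.DictWriter` can be pointed at a file opened with `open(path, "w", newline="")`.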

Just as anyone can build a website, anyone can build their own web scraper. However, doing so requires some programming knowledge, and the more capable you want the scraper to be, the more knowledge you need.

The alternative to a self-built scraper is a pre-built web scraper that you simply download and run. Many of these come with advanced options you can customize, such as scrape scheduling and JSON or Google Sheets exports.

A browser extension is an app-like program that can be added to your browser, such as Google Chrome or Firefox. The advantage of this kind of scraper is that it integrates with your browser, so it is very easy to run and operate.

However, any advanced feature that falls outside what the browser allows won't work in a browser extension. For example, IP rotation is not possible when using one.
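
For context, IP rotation in a standalone script often amounts to cycling requests through a pool of proxies. The sketch below shows one simple round-robin approach; the proxy addresses and the `ProxyRotator` class are hypothetical, and no request is actually sent.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace with proxies you are allowed to use.
PROXIES = [
    "http://proxy1.example:8080",
    "http://proxy2.example:8080",
    "http://proxy3.example:8080",
]


class ProxyRotator:
    """Hands out proxies in round-robin order."""

    def __init__(self, proxies):
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        return next(self._cycle)

    def build_opener(self):
        """Return (proxy, opener) where the opener routes traffic via proxy."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        return proxy, urllib.request.build_opener(handler)


rotator = ProxyRotator(PROXIES)
# Build four openers; the fourth wraps around to the first proxy.
used = [rotator.build_opener()[0] for _ in range(4)]
print(used)
```

Each opener returned by `build_opener` could then be used with `opener.open(url)` so that consecutive requests leave from different IP addresses.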

Although computer software scrapers are not as convenient as extensions, they are not limited by what browsers can and cannot do.

Since they can be downloaded and set up on your computer, they are more complex than web scrapers that operate within a browser. However, they also possess sophisticated features that are not confined by the limitations of a browser.

A user-interface web scraper is a web scraping tool with a user-friendly interface. Users can enter URLs, set parameters, and view results without writing code. These scrapers are generally easier to use for people with limited technical knowledge.

A local web scraper runs on your computer, using its resources and internet connection. This means that if your scraping job needs a lot of CPU and RAM, your computer may become very slow while the scraper is running.

A cloud web scraper avoids this problem.

It runs on a remote server and extracts data from websites without using your computer's resources, leaving your computer free for other tasks.

What are your customers doing? What about your potential customers? How does your competitor's pricing compare to yours?

Quality data collected from websites can help a company analyze its consumers and decide which direction to take in the future.

Nothing is more valuable than staying informed. From monitoring reputations to tracking industry trends, web scraping keeps that information tracked and up to date.

How to do web scraping efficiently and easily? How do we avoid website blocking and CAPTCHA recognition? How do we minimize the cost of scraping websites?

Nstbrowser can solve all your troubles!

High-quality data scraping. As an anti-detect browser, Nstbrowser offers state-of-the-art infrastructure, talented developers, and extensive experience to help minimize missing or incorrect data.

Completely unblock websites. Nstbrowser has the most comprehensive website unblocker program. It can easily unblock websites with Web Unblocker, Captcha Solver, Intelligent IP Rotation, and Premium Proxies, guaranteeing seamless web scraping.

Free to use. Nstbrowser is now a completely free fingerprint browser. Simply download and log in to experience unlimited Profiles and unlimited environment configurations.

Legal compliance. You may not know all the do's and don'ts of web scraping, but a service provider with an in-house team of legal professionals certainly does. Nstbrowser aims to help you stay compliant.

Legal compliance was mentioned above. So, is web scraping itself legal?

In short, the act of web scraping is not illegal and there is no specific law against web scraping.

However, there are some rules you need to follow. In some cases, web scraping may violate other laws or regulations, thus making web scraping illegal.

For example:

Many websites provide dedicated APIs for developers to fetch data. APIs are usually more stable and efficient than web crawling, and place less of a burden on the web server.

So, before developing a scraper, find out whether the target website provides an API and check its documentation. If the API meets your needs, use it in preference to scraping.

Terms of service usually contain the provisions of the website on data usage and data collection. Violation of these terms may result in legal issues or banning.

Carefully read the terms of service of the target website before scraping any data. If the terms explicitly prohibit crawling, do not run the scraper.

The robots.txt file tells crawlers and scrapers which pages they may access and which they may not. Although robots.txt is not a legal document, respecting it is a form of netiquette.

When writing a scraper, first check and parse the robots.txt file of the target website. You can use a robots.txt parsing library to do this automatically.
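
One option is Python's standard-library `urllib.robotparser`. The sketch below parses an example robots.txt from a string so that it runs offline; the file contents, the `allowed` helper, and the `my-scraper` user agent are assumptions for illustration. Against a live site you would typically call `set_url()` and `read()` on the parser instead of `parse()`.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example needs no network.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(url, user_agent="my-scraper"):
    """Return True if robots.txt permits `user_agent` to fetch `url`."""
    return parser.can_fetch(user_agent, url)


print(allowed("https://example.com/products/1"))
print(allowed("https://example.com/private/data"))
```

A scraper can call a check like `allowed(url)` before each request and skip any URL the site has asked crawlers not to fetch.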

The crawled data may be protected by copyright. Using or publishing this data may violate copyright laws.

So, it is important to confirm the copyright status of crawled data before using or distributing it. If the data is copyrighted, obtain written permission from the copyright owner first.

Great! Now that you know all the basics of web scraping, what is the best web scraper for you?

We highly recommend Nstbrowser.

Not only is it free to download and use, but it comes with a very powerful set of features: