
Using Node.js for web scraping is a common need, whether you want to gather data from a website for analysis or display it on your own site. Node.js is an excellent tool for this task.

By reading this article, you will:

Web scraping is the process of extracting data from websites. It involves using tools or programs to simulate browser behavior and retrieve the needed data from web pages. This process is also known as web harvesting or web data extraction.

Web scraping has many benefits, such as:

"Node.js" is an open-source, cross-platform JavaScript runtime environment that executes JavaScript code on the server side. Created by Ryan Dahl in 2009, it is built on Chrome's V8 JavaScript engine. Node.js is designed for building high-performance, scalable network applications, especially those that handle a large number of concurrent connections, like web servers and real-time applications.

Node.js is a popular JavaScript runtime environment that allows you to use JavaScript for server-side development. Using Node.js for web scraping offers several advantages:

Without further ado, let's get started with data scraping with Node.js!

First, download and install Node.js from its official website. Follow the detailed installation guides for your operating system.

Node.js offers many libraries for web scraping, such as Request, Cheerio, and Puppeteer. Here, we'll use Puppeteer as an example. Install Puppeteer using npm with the following commands:

    mkdir web-scraping && cd web-scraping
    npm init -y
    npm install puppeteer

Create a file, such as index.js, in your project directory and add the following code:

goTo method is used to open a webpage. It takes two parameters: the URL of the webpage to open and a configuration object. We can set various parameters in this object, such as waitUntil, which specifies to return after the page has finished loading.waitForSelector method is used to wait for a selector to appear. It takes a selector as a parameter and returns a Promise object when the selector appears on the page. We can use this Promise object to determine if the selector has appeared.content method is used to get the content of the page. It returns a Promise object, which we can use to fetch the content of the page.page.$eval method is used to get the text content of a selector. It takes two parameters: the first is the selector, and the second is a function that will be executed in the browser. This function allows us to retrieve the text content of the selector.const puppeteer = require('puppeteer');

async function run() {

const browser = await puppeteer.launch({

headless: false,

ignoreHTTPSErrors: true,

});

const page = await browser.newPage();

await page.goto('https://airbnb.com/experiences/1653933', {

waitUntil: 'domcontentloaded',

});

await page.waitForSelector('h1');

await page.content();

const title = await page.$eval('h1', (el) => el.textContent);

console.log(title);

await browser.close();

}

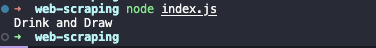

run();Run index.js with the following command:

    node index.js

After running the script, you will see the output in the terminal:

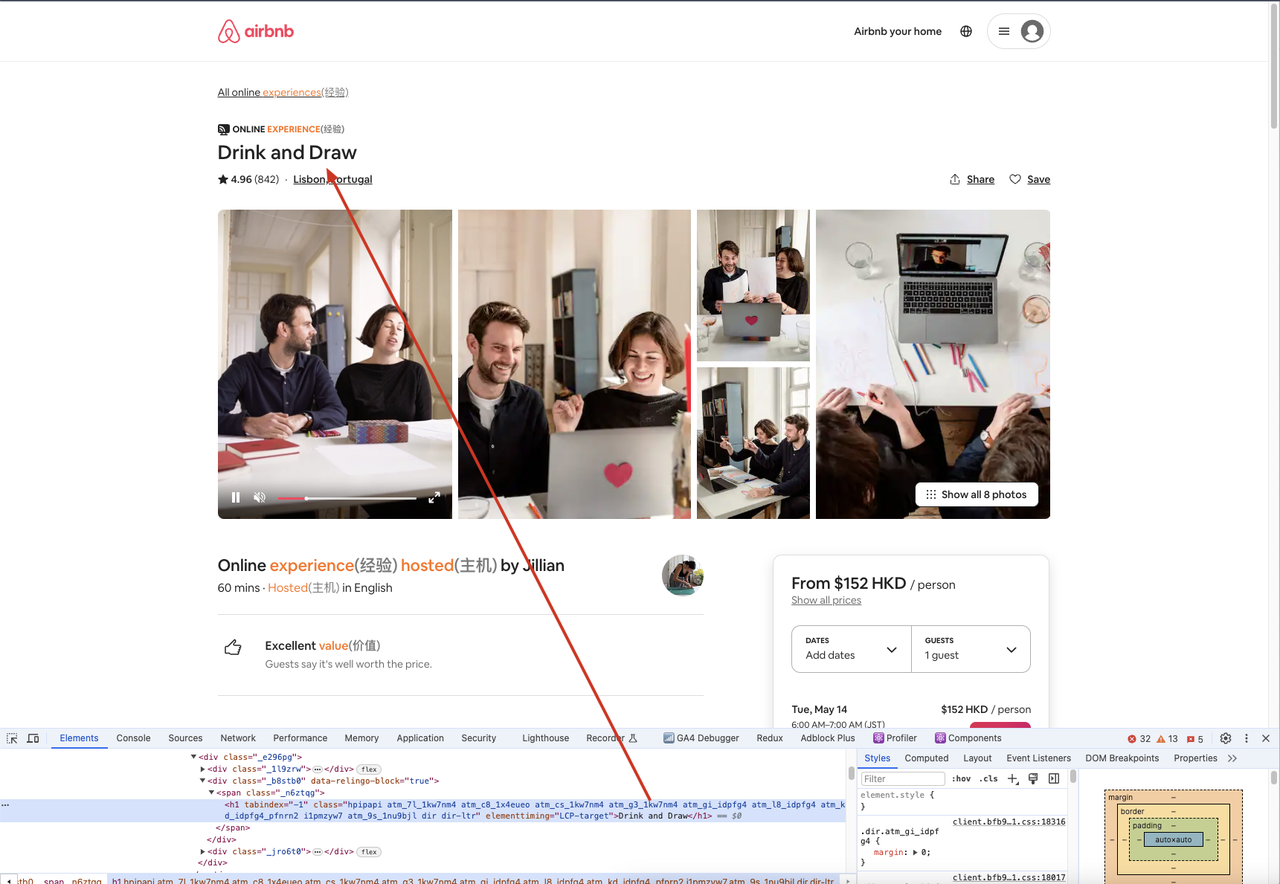

In this example, we use puppeteer.launch() to create a browser instance, browser.newPage() to create a new page, page.goto() to open a webpage, page.waitForSelector() to wait for a selector to appear, and page.$eval() to get the text content of a selector.
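The same steps can also be wrapped in a small reusable function. The following is a minimal sketch rather than the article's original code: `scrapeText` and its `launcher` parameter are hypothetical names introduced here, and the 10-second timeout is an arbitrary choice. Passing the Puppeteer module in as an argument keeps the function easy to exercise without a real browser, and the `try`/`finally` block closes the browser even when a step fails, for example when `waitForSelector` times out.

```javascript
// Hypothetical helper: opens `url`, waits for `selector`, and returns its text.
// `launcher` is anything with a Puppeteer-style launch() method,
// for example the module returned by require('puppeteer').
async function scrapeText(launcher, url, selector) {
  const browser = await launcher.launch();
  try {
    const page = await browser.newPage();
    await page.goto(url, { waitUntil: 'domcontentloaded' });
    // Rejects if no matching element appears within 10 seconds (assumed value).
    await page.waitForSelector(selector, { timeout: 10000 });
    const text = await page.$eval(selector, (el) => el.textContent);
    return text.trim();
  } finally {
    // Runs on success and on failure, so no browser process is left behind.
    await browser.close();
  }
}
```

With Puppeteer installed, it could be called as `scrapeText(require('puppeteer'), 'https://airbnb.com/experiences/1653933', 'h1')`, which returns a Promise for the trimmed heading text.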

To verify the result, open the scraped page in a regular browser, open the developer tools, and use the same selector to locate the element. Its content should match the text the script printed.
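When comparing the two values, keep in mind that `textContent` returns the raw text of the element, which can include line breaks and indentation that the browser does not display. Below is a small sketch of a comparison that ignores such whitespace differences; the helper names `normalizeText` and `sameText` are hypothetical and introduced only for illustration.

```javascript
// Collapse runs of whitespace into single spaces and trim both ends.
function normalizeText(text) {
  return text.replace(/\s+/g, ' ').trim();
}

// True when two strings are equal once whitespace differences are ignored.
function sameText(scraped, expected) {
  return normalizeText(scraped) === normalizeText(expected);
}
```

Here `scraped` would be the value printed by the script and `expected` the text copied from the developer tools.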

While using Puppeteer for web scraping, some websites may detect your scraping activity and return errors like 403 Forbidden. To avoid detection, you can use various techniques such as:

These methods help bypass detection, allowing your web scraping tasks to proceed smoothly. For advanced anti-detection techniques, consider using tools like Nstbrowser - Advanced Anti-Detect Browser.
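As one illustration of handling such responses, the sketch below combines two generic ideas: checking the HTTP status that `page.goto()` reports, so that a 403 is noticed instead of an error page being scraped silently, and pausing for a random interval before each request. This is only a sketch under stated assumptions, not a complete anti-detection approach: `randomDelay`, `politeGoto`, and the 1 to 3 second default range are hypothetical choices made for this example, and a delay alone is unlikely to defeat dedicated bot detection.

```javascript
// Resolve after a random pause between minMs and maxMs milliseconds.
function randomDelay(minMs, maxMs) {
  const ms = minMs + Math.random() * (maxMs - minMs);
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Hypothetical wrapper around page.goto(): waits a random interval, navigates,
// and throws if the server answers 403 Forbidden.
async function politeGoto(page, url, minMs = 1000, maxMs = 3000) {
  await randomDelay(minMs, maxMs);
  const response = await page.goto(url, { waitUntil: 'domcontentloaded' });
  // goto() can resolve to null (e.g. same-page anchor navigation), so guard it.
  if (response && response.status() === 403) {
    throw new Error(`Blocked with 403 Forbidden: ${url}`);
  }
  return response;
}
```

A caller could catch the thrown error and decide whether to retry later, switch strategy, or stop.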